Fresh GSA SER Target Link List Secrets for Better LPM (Links per Minute)

GSA SER Link Lists

Understanding the Role of GSA SER Link Lists in Modern SEO

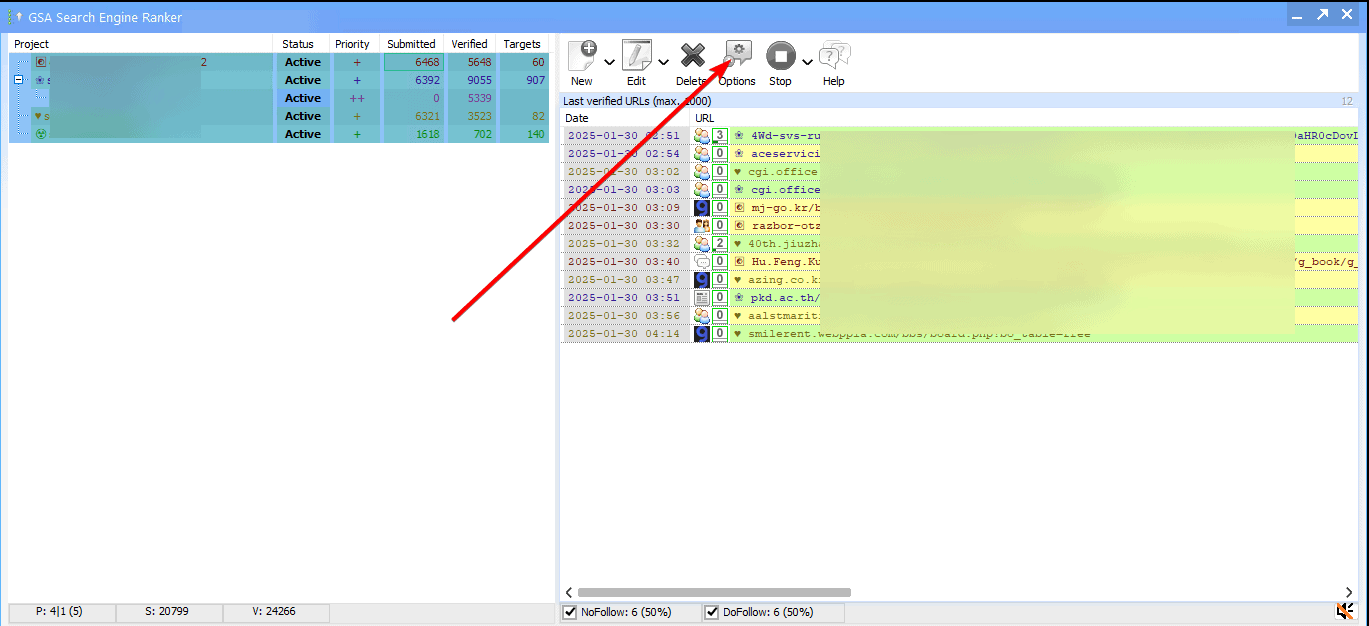

Building a diverse and robust backlink profile remains a cornerstone of search engine optimization. Among the arsenal of tools available to experienced SEOs, GSA Search Engine Ranker stands out for its sheer automation power. At the heart of every successful GSA SER campaign lies a critical component that can make or break your results: GSA SER link lists. These lists are not mere collections of URLs; they are carefully curated target sets that define where your automated backlinks will land. Without high-quality, filtered lists, the tool’s blistering speed can quickly become a liability rather than an asset.

What Exactly Are GSA SER Link Lists?

In the context of the software, GSA SER link lists are imported text files of target URLs on which the ranker will try to register accounts and post content. These can be lists of blog comment pages, guestbooks, forum profiles, or any other platform type that GSA SER supports. The software uses these lists to identify each target's platform, register, and submit automatically. The quality, freshness, and niche relevance of these URLs directly determine how many of your links survive and how much authority is passed to your money site.

Why Generic Lists Are a Dangerous Shortcut

Many beginners make the mistake of downloading free, widely circulated GSA SER link lists from public forums. While tempting, these lists are often saturated. Because so many other marketers post to the same targets, your backlinks end up planted in a dense spam network that search engines are quick to devalue. Worse, public lists contain a high percentage of dead domains, login-only platforms, and sites that have already been penalized. Using them leads to large numbers of failed submissions, wasted proxies, and a link graph that does more harm than good.

Key Characteristics of an Effective Link List

A high-performance link list is not a static asset. It requires constant grooming based on platform compatibility, domain authority metrics, and historical success rates. For proper tiered link building, your GSA SER link lists should be segmented according to the tier they service. A Tier 1 list demands high-quality, moderated platforms, while Tier 2 and 3 lists can tolerate faster, more aggressive targets. The best lists are often private, scraped from fresh footprints, and filtered through dedicated verification tools to remove duplicates and dead entries before they ever reach GSA SER.
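As a minimal sketch of the duplicate-removal step, the Python below takes raw list lines and keeps the first URL seen for each host, skipping blank and malformed entries. Deduplicating per host rather than per exact URL is an assumption suited to registration and profile targets; for blog comment lists, where several pages on one host can each carry a link, exact-URL deduplication may be the better choice. Detecting dead entries requires a network check and is deliberately left out. The function name `dedupe_by_host` is hypothetical.

```python
from urllib.parse import urlsplit


def dedupe_by_host(lines):
    """Keep the first URL seen for each host; skip blank and malformed lines."""
    seen_hosts = set()
    kept = []
    for raw in lines:
        url = raw.strip()
        if not url:
            continue  # blank line in the imported text file
        try:
            parts = urlsplit(url)
        except ValueError:
            continue  # unparseable entry, e.g. an unbalanced IPv6 bracket
        if parts.scheme not in ("http", "https") or not parts.hostname:
            continue  # not a usable web target
        host = parts.hostname  # urlsplit already lowercases the hostname
        if host.startswith("www."):
            host = host[4:]  # treat www.example.com and example.com as one host
        if host in seen_hosts:
            continue  # duplicate host: keep only the first entry
        seen_hosts.add(host)
        kept.append(url)
    return kept
```

For example, `dedupe_by_host(["http://www.example.com/a", "https://example.com/b"])` returns `["http://www.example.com/a"]`, because both lines resolve to the same host.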

Essential Filters for Scrubbing Your Targets

To maintain link velocity without destroying campaign health, every imported list must be scrubbed. Critical filters include removing domains written in Chinese or Japanese characters unless you are specifically targeting those markets, eliminating sites whose authority falls below your chosen threshold (Alexa rank was the traditional yardstick, but Amazon retired Alexa in 2022, so a current third-party authority metric has to stand in), and stripping out URLs with a high outbound link count. Applying these filters to your GSA SER link lists ensures that the software spends its time and CPU cycles on hosts that actually accept submissions, which drastically improves the live link ratio.
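The filters above can be sketched in Python as follows, assuming each target has already been annotated with an authority score and an outbound link count by some earlier step. The `Target` fields, the `scrub` and `has_cjk_host` names, and the default thresholds (20 and 100) are hypothetical placeholders, not recommended values. The CJK check is approximate: it covers only the Hiragana, Katakana, and main CJK ideograph ranges, and it decodes punycode (`xn--`) labels so internationalized domains are caught as well.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit


@dataclass
class Target:
    url: str
    authority: float     # score from whichever metrics provider you use (assumption)
    outbound_links: int  # outbound links counted on the target page (assumption)


def _is_cjk(ch):
    cp = ord(ch)
    return (0x3040 <= cp <= 0x30FF      # Hiragana and Katakana
            or 0x3400 <= cp <= 0x4DBF   # CJK Unified Ideographs Extension A
            or 0x4E00 <= cp <= 0x9FFF)  # CJK Unified Ideographs


def has_cjk_host(url):
    """True if any hostname label contains Chinese or Japanese characters."""
    host = urlsplit(url).hostname or ""
    for label in host.split("."):
        if label.startswith("xn--"):
            try:
                # Decode the punycode form of an internationalized label.
                label = label[4:].encode("ascii").decode("punycode")
            except UnicodeError:
                continue  # malformed punycode label; leave it to other filters
        if any(_is_cjk(ch) for ch in label):
            return True
    return False


def scrub(targets, min_authority=20.0, max_outbound=100, allow_cjk=False):
    """Drop CJK hosts, low-authority hosts, and pages with too many outbound links."""
    kept = []
    for t in targets:
        if not allow_cjk and has_cjk_host(t.url):
            continue
        if t.authority < min_authority:
            continue
        if t.outbound_links > max_outbound:
            continue
        kept.append(t)
    return kept
```

Passing `allow_cjk=True` keeps the character filter switchable for campaigns that do target those markets.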

How to Source and Prepare Link Lists for Maximum Output

Serious practitioners rarely rely on a single list source. They combine URLs harvested with custom footprint scraping tools such as Scrapebox or Gscraper, or with GSA SER's built-in search engine scraper. They then run the collected URLs through a verification pipeline. This verification, which checks for registration forms, HTML field compatibility, and the platform engine type, turns raw scraped data into actionable GSA SER link lists. Once verified, the targets are sorted by platform (WordPress, Drupal, phpBB, etc.) and loaded into the matching project, which allows tailored comment scripts and submission cadences.
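Sorting verified targets by platform might look like the sketch below. It assumes the verification step produces `(url, platform)` pairs; that input format and the helper names `group_by_platform` and `write_platform_files` are hypothetical. Each platform ends up in its own plain-text file with one URL per line, matching the text-file form in which lists are imported.

```python
import re
from collections import defaultdict
from pathlib import Path


def group_by_platform(verified):
    """Group verified (url, platform) pairs into sorted, de-duplicated URL lists per platform."""
    groups = defaultdict(set)
    for url, platform in verified:
        groups[platform.strip().lower()].add(url.strip())
    # Sorting keeps the output stable between runs, so changes are easy to diff.
    return {platform: sorted(urls) for platform, urls in groups.items()}


def write_platform_files(groups, out_dir):
    """Write one plain-text file per platform, one URL per line."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    for platform, urls in groups.items():
        # Replace anything unsafe in a filename (spaces, slashes) with underscores.
        safe_name = re.sub(r"[^a-z0-9]+", "_", platform) or "unknown"
        (out / f"{safe_name}.txt").write_text("\n".join(urls) + "\n", encoding="utf-8")
```

Normalizing platform names to lowercase means "WordPress" and "wordpress " from different verification runs land in the same file.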

The Critical Role of Filtering for Tier 1 Tactics

If you are using GSA SER for Tier 1 links—a method reserved for confident users with deep filtering skills—your lists must be exceptionally sterile. Targets must accept contextual content, have minimal spam history, and ideally show genuine traffic. You cannot afford to blast irrelevant links onto high-authority sites with a low tolerance for automation. When curating your GSA SER link lists for Tier 1, manual verification of the top 10% of domains by authority often saves a campaign from catastrophic penalties while preserving the speed advantage of the ranker.
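To pull the top 10% of domains by authority for manual review, a sketch can rank a domain-to-score mapping and take the first tenth, rounding up so that small lists still yield at least one domain to check. The scores stand in for whatever authority metric you use, and `manual_review_queue` is a hypothetical helper. The cutoff is computed with integer arithmetic to sidestep floating-point edge cases.

```python
def manual_review_queue(scores, percent=10):
    """Return the top `percent`% of domains by authority score, highest first."""
    if not 1 <= percent <= 100:
        raise ValueError("percent must be between 1 and 100")
    n = len(scores)
    if n == 0:
        return []
    # Integer ceiling of n * percent / 100, so small lists still yield at least one domain.
    k = -(-n * percent // 100)
    # Highest score first; ties broken alphabetically so the result is deterministic.
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return [domain for domain, _ in ranked[:k]]
```

With 30 scored domains and the default `percent=10`, the queue holds the three highest-scoring domains.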

Maintaining Link List Health Over Time

A static list deteriorates rapidly. Domains expire, comments close, and anti-spam measures evolve. Your archive of GSA SER link lists needs a regular maintenance cycle. Successful operators implement a feedback loop: after a project runs, they export the “Verified” and “Submitted” logs, then use those live targets to build subsequent, pre-validated lists. This living database becomes an invaluable proprietary asset that no publicly available list can compete with, compounding the efficiency of every subsequent link building sprint.
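The feedback loop can be sketched as a set merge, assuming the exported logs are plain text with one URL per line (an assumption about the export format). In this sketch, verified targets are folded into a master list, while URLs that were submitted but never verified go into a separate retry list instead of being discarded. That split and the name `merge_feedback` are illustrative choices, not a built-in GSA SER feature.

```python
def merge_feedback(master, verified, submitted):
    """Fold verified URLs into the master list and collect unverified submissions.

    All arguments are iterables of URL strings, one per exported line (assumed format).
    Returns (updated_master, retry), both sorted and de-duplicated.
    """
    def clean(lines):
        # Strip whitespace and drop blank lines.
        return {line.strip() for line in lines if line.strip()}

    master_set, verified_set, submitted_set = clean(master), clean(verified), clean(submitted)
    updated_master = sorted(master_set | verified_set)
    # Submitted but neither verified this run nor already known-good.
    retry = sorted(submitted_set - verified_set - master_set)
    return updated_master, retry
```

In practice each argument could be the result of reading an exported log, for example `Path("verified.txt").read_text(encoding="utf-8").splitlines()` with a hypothetical file name, and both results written back out as plain text for the next run.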

Ultimately, the software is only as intelligent as the instructions and targets you feed it. Mastering the art of constructing, verifying, and refreshing GSA SER link lists is what separates automated spam from a mechanized, scalable SEO strategy that actually moves the needle in the search results.